Best-performing Web Scraping Proxies for all your Projects

Level up your Web Scraping business options without dealing with captchas.

- large-scale scraping possible

- Avoid IP bans

- Access region-specific content

- Improved security

- Unlimited Bandwidth

Static IPv6 Plans

- 3500 IPv6 Proxies

- HTTPS Protocol Support

- Data Center Proxies

- single ip per /64 subnet

- user/pass or IP Auth

- No Ipv4 Leak

- No DNS Leak

- Delivery Time < 2 hours!

- 4000 IPv6 Proxies

- HTTPS Protocol Support

- Residential Proxies

- single ip per /64 subnet

- user/pass or IP Auth

- No Ipv4 Leak

- No DNS Leak

- Delivery Time < 2 hours!

- 60000 IPv6 Proxies

- HTTPS Protocol Support

- Data Center Proxies

- single ip per /48 subnet

- user/pass or IP Auth

- No Ipv4 Leak

- No DNS Leak

- Delivery Time < 2 hours!

- 60000 IPv6 Proxies

- HTTPS Protocol Support

- Residential Proxies

- single ip per /48 subnet

- user/pass or IP Auth

- No Ipv4 Leak

- No DNS Leak

- Delivery Time < 2 hours!

Rotated IPv6 Plans

- 1 Million IP Pool

- Residential Proxies

- 1 single proxy per /48

- New IP each request

- HTTPS Protocol Support

- user/pass or IP Auth

- No Ipv4 Leak

- No DNS Leak

- Delivery Time < 2 hours!

- 4 Million IP Pool

- Residential Proxies

- 1 single proxy per /48

- New IP each request

- HTTPS Protocol Support.

- user/pass or IP Auth

- No Ipv4 Leak

- No DNS Leak

- Delivery Time < 2 hours!

- 1 Million IP Pool

- Datacenter Proxies

- 1 single proxy per /48

- New IP each request

- HTTPS Protocol Support.

- user/pass or IP Auth

- No Ipv4 Leak

- No DNS Leak

- Delivery Time < 2 hours!

- 4 Million IP Pool

- Datacenter Proxies

- 1 single proxy per /48

- New IP each request

- HTTPS Protocol Support.

- user/pass or IP Auth

- No Ipv4 Leak

- No DNS Leak

- Delivery Time < 2 hours!

Our Features

Residential/Data Center

Stay 100% anonymous and use only real IP addresses provided by real Internet service providers and take advantage of the cleanest proxy pools on the market. Zero bans, penalties, or captchas.

Unlimited Bandwidth

To deliver maximum efficiency to our customers, we have based our servers on 10/40Gbps speed networks that do not employ throttling or bandwidth limits.

HTTPs/SOCKS5 Support

We Support HTTPs/SOCKS5 Protocols over IPv4 and IPv6 plans to provide the best benefits for your business.

Static Proxies Plans

Direct ISP connectivity provides 24/7 IP availability. Keep your web sessions for as long as you need with Static Residential IPs.

Expert Support Team

With our friendly support Team working 24/7, get instant access to your purchased packs with the live support provided by our engineers!

Highly Anonymous

Stay 100% anonymous and use only real IP addresses provided by real Internet service providers and take advantage of the cleanest proxy pools on the market. Zero bans, penalties, or captchas.

Dual Authentication

We offer our clients the choice whether to have user/pass authentication or IP address authentication to provide the most benefit for their business!

Optional Monthly Rotation

We offer our clients the ability to replace their IPs and access a new IP pool after each monthly bill cycle!

Rotated Proxies Plans

Our rotated plans rotates proxies per each browser session by default to give you the most benefits of rotated proxies!

Proxies for all your needs

Our dedicated proxies are ideal for Social Networks, Rank Tracking, Game sites, Captcha Solving, Online Shopping, Sneaker copping, and many other purposes...

We Provide Custom Packs

Join Our Newsletter

Signup to receive email updates on new services, Special promotions, tech tips and more.

Web scraping is the process of extracting data from websites automatically. It is a powerful tool that can be used for a variety of purposes, such as market research, competitive analysis, and data mining. However, web scraping can also be disruptive to websites, and many websites have implemented measures to prevent it. That’s where web scraping proxies come as a savior.

As we know web scraping proxies can help you overcome these challenges, we are here to help. This article is here to give you all you need to know about this interesting topic in a simplified language that fits beginners and professionals.

What are web scraping proxies?

A web scraping proxy is a server that sits between your computer and the website you are scraping. It routes your requests through the proxy server, which hides your IP address and makes it appear as if you are coming from a different location.

Benefits of using proxies for web scraping

When you use a proxy service like what V6proxies offer, you get these benefits:

- Anonymity: Web scraping proxies can help you hide your IP address and make it more difficult for websites to track your scraping activities.

- Avoid IP bans: Many websites ban IP addresses that are found to be scraping their data. Web scraping proxies can help you avoid IP bans by rotating through a pool of different IP addresses.

- Access to region-specific content: Some websites restrict access to their content based on the user’s location. Web scraping proxies can help you access region-specific content by using IP addresses from different countries.

- Increased scraping efficiency: By making multiple requests simultaneously and bypassing rate limits, proxies increase your web scraping efficiency to the roof.

- High-Volume Scraping: Detecting web scraping activities is difficult, but when scrapers become more active, they become easier to identify. Proxies allow you to be active and scrape high volume of data and avoid detection.

How do web scraping proxies work?

Here’s a step-by-step explanation of how proxies enable web scraping:

1. Proxy Configuration

You set up your web scraping tool or script to route its internet requests through a proxy server. You specify the proxy server’s IP address and port in your scraping tool’s settings.

2. Proxy server receives your request

When your scraping tool sends a request to a target website, it first goes to the proxy server rather than directly to the website.

3. Proxy server processes the request

The proxy server receives the request and acts on it according to its configuration and purpose. It may perform tasks like altering the request, managing IP rotation, or handling security measures.

4. Proxy server forwards request to destination

After processing the request, if necessary, the proxy server forwards it to the actual destination server or website on your behalf.

The destination server processes the request, believing it’s coming from the proxy server.

5. Proxy handles destination site response

The destination server sends a response back to the proxy server as if the proxy made the request.

6. You get your data

The proxy server then relays the response back to your web scraping tool.

Web scraping proxy types

Residential Proxies:

- Provided by Internet Service Providers (ISPs) to regular users and homes.

- Mimic real users, so it’s less likely to be detected and blocked.

- Suitable for heavily guarded websites like e-commerce platforms and social media networks.

ISP Proxies:

- Originate from data centers operated by internet service providers.

- Offer stability, reliability, faster speeds, and lower latency.

- Ideal for high-speed data extraction on platforms like streaming services and gaming websites.

Mobile Proxies:

- Use IP addresses assigned to devices by cellular network providers.

- IP addresses change frequently due to user mobility.

- Effective for websites with strong anti-bot measures, such as social media and app stores.

Data Center Proxies:

- Provided by data centers, not residential ISPs.

- Typically cheaper and faster than residential proxies.

- Suitable for sites with lower security like blogs, forums, and public databases.

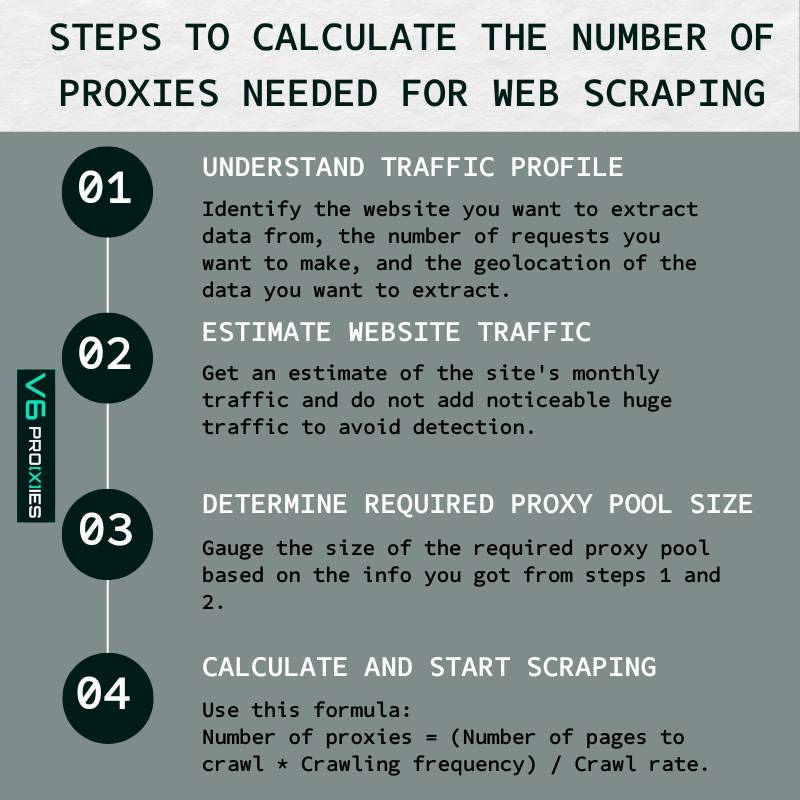

How many proxies do web scraping need?

Determining the number of proxies required for a web data extraction project can be complex. It’s not a one-size-fits-all answer. Here’s a breakdown to help you understand:

Understanding Traffic Profile

A ‘traffic profile’ describes your web data extraction needs.

This profile has three main factors:

- Website: The publicly accessible site you intend to extract data from.

- Request Volume: The number of requests and how frequently you aim to make them.

- Geolocation: This pertains to extracting data from a site based on a specific country or region. Some sites might display varied content depending on your location, like different currencies or shipping information.

Estimating Website Traffic

It’s essential to consider the site’s existing traffic. For instance, if a site has 100K visitors per month and you plan to make an additional 100K requests via proxies, this is problematic.

But, if a site has 100 million visits daily, making an additional 100K requests is feasible.

So, estimating the site’s traffic helps set realistic expectations from your proxy solutions.

Determining Proxy Pool Size

Once you’re clear about the website, request volume, and any geolocation specifics, you can start to gauge the size of the required proxy pool.

You can do the calculation through this formula:

Number of proxies = (Number of pages to crawl * Crawling frequency) / Crawl rate.

What does a reverse proxy do for web scraping?

A reverse proxy, particularly in the context of larger websites or web applications, can serve as a defense mechanism against web scraping. Here’s how:

- Masking the Real Server: By hiding the true server’s IP, a reverse proxy can make it more challenging for scrapers to target the actual data storage. Scrapers will see only the IP of the reverse proxy.

- Load Balancing: If a site is being heavily scraped, the reverse proxy can distribute these requests across several servers, minimizing the strain on any single server.

- Security Measures: Reverse proxies can detect and block suspicious activities, which includes repetitive requests from web scrapers.

- Content Switching: If it detects scraping activities, a reverse proxy might direct the scraper to a different version of the site or serve outdated/cached information.

Start today: buy the best web scraping proxies

Tired of bot blocks ruining your web scraping projects? It’s time to unlock the full data mining potential of the web with V6proxies scraping-optimized proxies.

- Rotating IP Addresses: Our proxies rotate through thousands of IP addresses to avoid blocks from anti-scraping systems. It’s like having unlimited keys to access any site.

- Crawlers Welcome: Our proxies are configured to seamlessly work with popular scraping tools like Scrapy, Puppeteer, and Selenium, integrating in just a few clicks.

- Geo-Targeted Locations: Our proxies can target servers from specific countries and cities, letting you gather region-exclusive data.

- High Request Volumes: Want to scrape a million pages a day? Our proxies can handle even the most demanding scraping needs.

With V6proxies, you get the speciality tools to drill down to the data you really want. Let our scraping proxies do the heavy lifting while you reap the rewards.

Note: The average timeout to receive a response from a simple & fast website through the proxies is 300-700 milliseconds. If you are loading a big or slow website, please make sure to increase your timeout. Make sure you select the correct proxy protocol in your program

Conclusion

Proxies play a vital role in web scraping, ensuring anonymity, security, and efficient data collection. They enable businesses to access region-specific content, avoid IP bans, and maintain high-volume scraping operations. Choosing the right proxy type such as V6proxies and responsible usage are key to unlocking their full potential in a data-driven world.

Articles Related:

- Best 5 Proxies for web scraping

- The Difference Between Screen Scraping and Web Scraping

How To Scrape Google Search Results With Python (Script Examples)

- Web Scraping Cookies: A full Beginner’s Guide (2024)

- Scraping Instagram Data (Followers, Profiles & Hashtags)

- What Is IP Rate Limiting? An In-Depth Guide (2024)

- How Websites Prevent Web Scraping? [Anti-Scraping Tools]

- A Guide to Google Maps Scraping (2004 Edition)